This year I had the privilege of attending Clojure/Conj in Alexandria, VA. Alexandria is a beautiful city, with a museum seemingly on every corner, and abundant trees just starting to turn yellow for the fall. It has an efficient metro system and a safe, welcoming atmosphere.

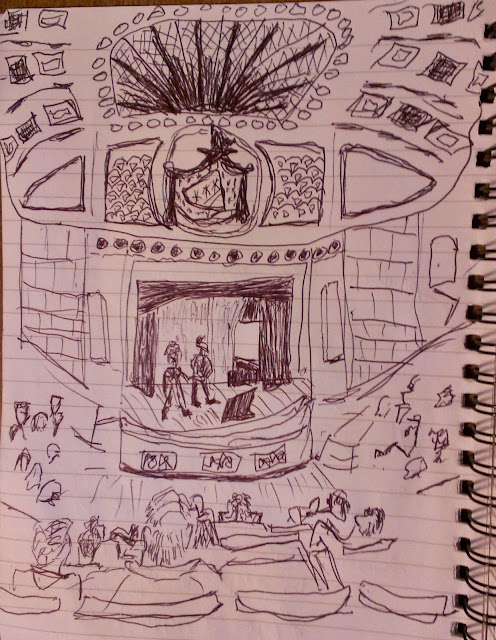

The conference was held in the unique George Washington Masonic Memorial. The venue has an impressive theater with quirky side rooms and hallways. The walls and alcoves were adorned with historical artifacts. The unusual, segmented layout lent itself to longer breakout discussions, with perhaps a little less broad social mixing as people were spread out in clusters. The conference started with a buzz of energy and eased into a serene atmosphere as attendees settled into the proceedings - happy to be there, meet up, and enjoy the experience.

Some themes were visualization, tooling, collaboration, and quick responses to issues raised in libraries, as exemplified by Borkdude, who seemed to be everywhere! It was also revealed that Babashka is used by 90% of Clojure survey respondents. Many discussions revolved around Portal, Cursive, Calva, and whitespace formatting. Many tool makers were taking the opportunity to brainstorm and collaborate closely.

Rich Hickey’s welcome address set a positive, thoughtful tone for the conference. All of the talks were excellent. If you only have time for one talk, I highly recommend watching "Science in Clojure: A Bird's Eye View" by Thomas – an engaging talk on scientific discovery and systematic innovation presented with a generous splash of humor. Make sure to catch the talks on ClojureTV.

The games night was a hit as always, with plenty of socializing, fun, and competitive strategies employed.

Conversations ranged from whitespace management in code formatting to the potential of brainwave interfaces. The Clojure community's diversity and creativity were on full display. I received lots of encouragement for Hummi.app. Many people voiced their hope for a better diagramming app, were really interested in the details of what it does, and had great suggestions.

A big thank you to Nubank and the organizers for making this fantastic event possible.

What follows are my notes about the people I met and the things they were working on:

James works on Afterglow, algorithmic light shows in Clojure.

Chase taught himself Clojure as his first programming language. He chose Clojure to go straight to the good stuff. Previously an English literature teacher.

Samuel is at Kevel working on advertisement serving. Glad to be working in Clojure, as it is a much more enjoyable challenge than previous work in mechatronics hardware. Eager to see technology move forward and hopes that we can be more ambitious about creating amazing things.

Jeaye is building Jank. Finding a lot of community support, and even more interest from outside the Clojure community.

Hanna has been coding Clojure for 6 years at Nubank, and enjoys the work and the team.

Francis is in Datom heaven at Nubank with more data than ever.

Tim S had a great take on the legend of bigfoot and why it was so popular on the west coast.

Hadil, longtime Clojure user and first-time Conj attendee, was looking forward to meeting David Nolen and Rich Hickey. Building a React Native app in ClojureScript for matching yard work providers with customers. Starting to contribute to the Jank project.

Michael has been enjoying the Microsoft Automatic Graph Layout library. Search and replace would be great in a diagram app. Clerk-style, file-based editing of a graph. Should the file be GraphQL or SVG, or maybe another source of truth? Observed astutely that Stu is a man of many disguises; he looks different every time he appears.

Anonymously overheard: “Borkdude just fixed my issue, I only submitted an issue 20 min ago” x2

> I heard several comments about library maintainers being responsive, and libraries being generally stable.

Chris O says managing whitespace conflicts is not your job, so let’s all move on.

> I’d love to not have to deal with whitespace when collaborating. It’s a pain, and unproductive.

Chris B was meeting with many users of Portal. Would love for Portal to appear inline with Cursive's new inline evaluation output.

> I’d love to be able to display HTML inline, and hopefully Kindly visualizations as well.

Chris H has a book project underway.

> Joy of Clojure is a popular recommendation as a book that makes you think. Maybe a new edition is on the way?

Ivan on raising kids in the big city. My daughter on vacation asks “where are the tall buildings?”

Osei and Eli were deep in consultation with the Datomic team.

Toby is looking forward to a trip to Italy in April.

Lauren recently completed a refactoring from REST to GraphQL.

Paul is solving sleep apnea at sovaSage. Their team and customer base are growing.

Kelvin works with Milt on a military training system.

> I remember meeting Milt at a Conj nearly a decade ago when he recommended I look into SVG when I was first thinking of making diagrams.

Colin is working on inline Cursive evaluation and a separate prompting plugin. IntelliJ code formatting is a constraint solver; is rule (3) a problem? cljfmt kind of breaks it. Would like to standardize, but will need to re-implement according to the constraint solver. I hope Claude can help him get that done.

> Such a large impact on so many programmers

Pez is enjoying Replicant and Portfolio. PostScript was the most dynamic Lisp. He hopes that Clojure formatting can standardize, and that he can promote a default in Calva.

> Great to see Pez and Colin together discussing how to implement rule 3. Both wanting to improve the compatibility of default formatting across editors.

David N is now at DRW, sifting through layers of Clojure archaeology. Oh that was popular, then this, then that. Seeing slices of history of when certain patterns were in favor.

> Nothing ever truly goes out of style in Clojure, it seems.

Brandon is leveraging machine learning and making mindful investments.

Rich spoke on the importance of ideas, fun, and planting seeds of community outside the current borders. Go there and share. Enable creative people, optimists. Retired from Nubank, but still working on Clojure with the core team.

> Having the opportunity to shake Rich’s hand and hear his thoughts on community building was inspiring.

Aaron has a graph layout constraint system. Working on compute services at Equinix. Looking forward to visiting the Smithsonian National Air and Space Museum.

Dustin is building Electric Clojure with his team. They are available for consulting work to help companies develop applications using their technology to drastically reduce the code for client/server functionality.

> It’s amazing what they have achieved with Electric Clojure; I wish I had a commercial application to try it on. Such a principled approach, focused on delivering value without incidental complexity.

Joe gave some insights on dealing with scale at Nubank, and the advantages of being able to preload data for queries.

Nacho is an engineering manager at Splash, addressing student debt and marginal loans.

> Nacho has a lot of empathy for those in need; this seems like a great fit.

Thomas’ talk - entertaining, informative and engaging, new testable knowledge, discovery, systematic exploration. Wolframite. Undeclared dependency on prayer. What quantum mechanics tells us about distributed systems. Dead plots, and the role Clay has in solving that. The trend of native Clojure libraries replacing Python interop. Order vs Chaos - voluntary cooperation. Science in a nutshell. Emmy for symbolic math. Responsiveness of the community: generateme added Ince on request.

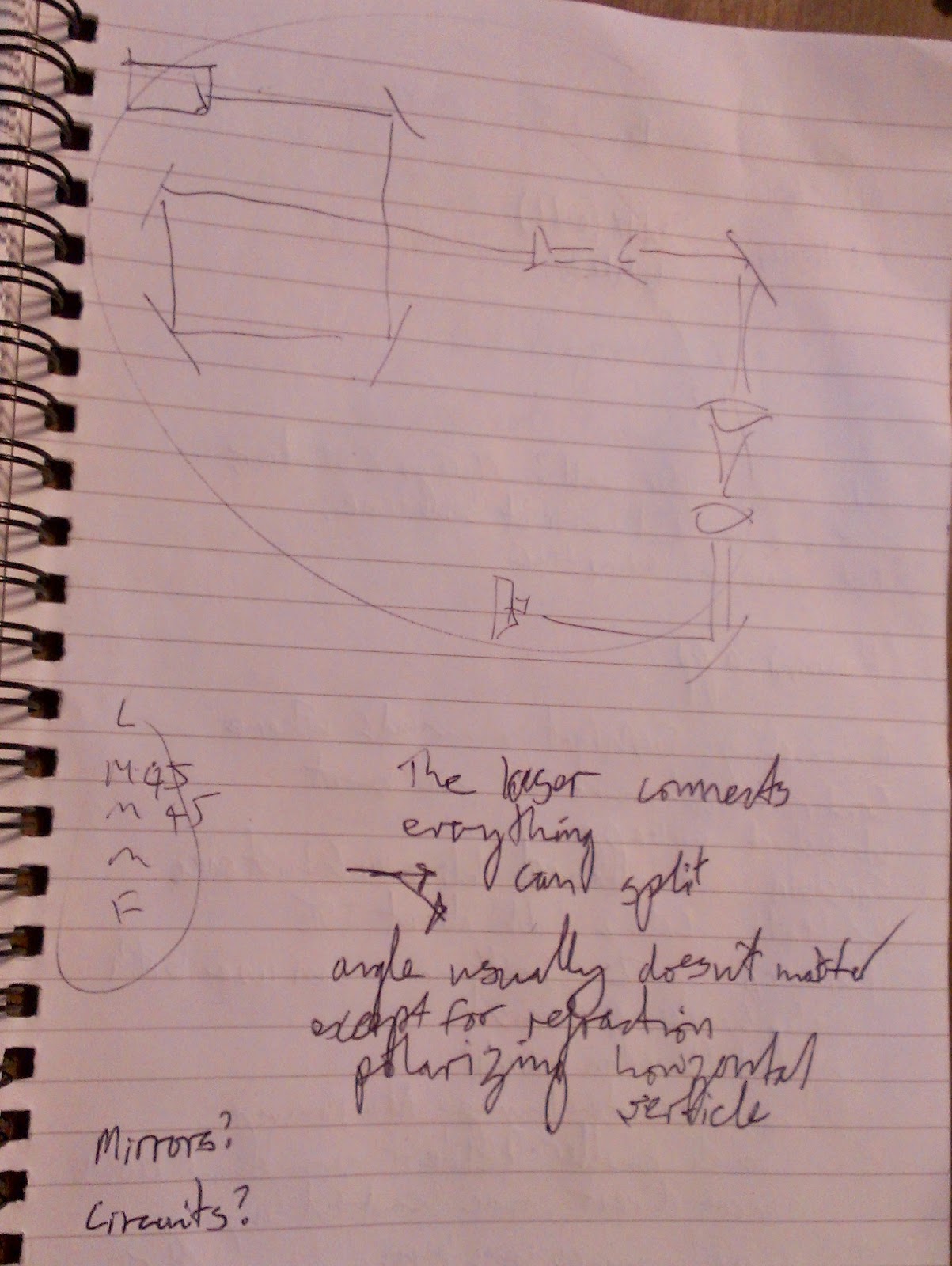

Designing experiments with mirrors and lasers. The laser connects everything. Can split the beam. Arrangement usually doesn’t matter except when refracting or polarizing. Similar to electric circuits?

Wesley commented on the absence of graph libraries in Clojure.

> Loom (no longer maintained) and Ubergraph are good, but many algorithms only have reference implementations in C and JavaScript.

Kira is at Broadpeak (commodities trading), working with a heap of data. Building a grammar of graphics for ggplot-style visualizations. Sits between very specific charts and basic rendering like SVG. Good for combining visualizations.

> ggplot uses functions that build data as a concise way to express visualizations.

Adam is interested in using kindly-render as a way to embed visualizations in badspreadsheet.

Andrew says thi.ng/geom needs a new maintainer!

Lucas is transpiling and resurrecting out-of-circulation games. Job hunting for junior roles is difficult when your current job title is senior.

Cam is looking forward to Halloween. Had 700 trick-or-treaters last year, and now has a giant spider attraction set up in anticipation. “When we find a bug in HoneySQL, Sean has already fixed it by the time we report it.”

Sean recommended reading The Socratic Method (Ward Farnsworth). Rich recommended it in his talk last year, Design in Practice, and Sean has found it useful for organizing thinking.

Sofia had read Socrates. She is going into a master's program on human-computer interaction through brainwaves, looking to improve the isolation of wave patterns.

> I hope somebody proposes a talk on the Socratic method at the next Conj.

Quetzaly is interested in joining Clojure Camp (https://clojure.camp/). This was the first time I’d heard of Clojure Camp, so Sofia showed us how it works. It’s based around pairing mentors and mentees. You can choose how many times you’ll be paired, what times work for you, and what topics you are interested in. You opt in every week that you’d like to continue.

> So great to have this as a way for people to pair up and work together on something productive.

Torstein works on fish feed management and automation. Norway has 2 written languages, Bokmål and Nynorsk, and its language overlaps with Swedish and Danish. There are many dialects. The Sámi people live in Norway, Sweden and Russia and have yet another language. Right now they cannot cross the Russian border. Learning more languages is a good thing: you learn to express more and be more creative. Nationalism and a drive to have one language have had the opposite, divisive effect.

> I am astonished at how complex the interactions of language and culture can be.

David Y is building a tax accounting startup.

> DataScript with a Firebase backend remains a popular stack, but relies on custom implementations.

Carin had a book recommendation: “Slow Horses”. LLMs can help us think. She hopes the next generation uses them to improve thinking instead of replacing thinking.

> The next generation is growing up in such a different learning environment. I hope they can think better than we ever could.

Jay is working at Viasat on DHCP network management.

Clojure/Conj 2024 left me inspired and grateful for the connections I made. The conference highlighted the language's evolution, its vibrant community, and its potential to shape the future of technology. I'm excited to see where the Clojure journey leads us next!